Recent developments

Google releases Gemini

Smaug-72B, a new open source LLM, outperforms GPT-3.5 and Mistral Medium

OpenAI to hit $2bn revenue annually and double this figure in 2025

Sam Altman sets out to reshape the chip industry with $7 trillion of funding

Canon challenges ASML with low cost chip making machine

Quote of the week

When a chief executive asks for trillions, not billions, when raising funds you know a sector may be getting a bit too hot.

What to make of all of this

OpenAI is becoming one of the fastest growing tech companies ever. It offers the industry’s best large language models (LLMs), but Google is quickly catching up. Google’s new model is almost as powerful as GPT-4, and the company is planning to release an even stronger one called Ultra. This, along with new open source models that keep improving, is prompting OpenAI to accelerate the launch of GPT-5.

To stay ahead, OpenAI knows it needs to achieve human-like artificial intelligence before anyone else. It has enough smart people to work on this, but it is short on the computing power needed. OpenAI’s CEO Sam Altman plans to fix this by putting $7 trillion into the chip making industry. The announcement has led to a lot of criticism. Jimmy Goodrich, a semiconductor industry expert, told The Wall Street Journal that funding isn't what the chip industry is lacking.

“In software, anything is possible—it really just is a money and coding problem. However, in the world of hard tech, you actually have to deal with the laws of physics. You have to think about the real world and engineering challenges, and this stuff is hard to do.”

Jimmy Goodrich

A Bernstein Research analyst, quoted in the same article, estimates that the sum spent on chip-manufacturing equipment throughout the industry's entire history slightly exceeds $1 trillion. Altman’s plan to invest seven times that amount could well result in an oversupplied market, causing prices to plummet and forcing companies to run their factories below capacity. In an industry burdened by high fixed costs, such a scenario would spell financial disaster.

Another way to deal with the current shortage of chips is by being smarter about how we use our resources, both on the hardware and software level. Just like in the 1970s oil crisis, when high prices and oil shortages led to new ways of finding oil and renewable energy, we'll likely see similar solutions in the AI world to overcome the chip shortage.

Chips are already improving quickly, with technology advancing from 7nm to 5nm in just two years. Canon has had its eye on making chip production more efficient for over 15 years. It has been experimenting with new technology that promises to cost a tenth as much as current methods and to consume up to 90% less power than the technology used by market leader ASML.

On the software level we are also seeing innovations that make generative AI more efficient. An alternative to the transformer architecture that underlies most current LLMs is on the rise. It is called S4, a structured state space model. With this architecture the computing cost increases linearly with the length of the context window. This means that considering double the amount of text when generating a response takes roughly twice the compute. With transformers, whose attention mechanism compares every token with every other token, it takes roughly four times as much. Mamba, a model that builds on this technique, is designed in a way that works well with the hardware, which means it won’t need as much computing power.
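
The doubling-versus-quadrupling comparison can be illustrated with a toy calculation. The sketch below only counts idealized operations: one comparison per pair of tokens for transformer-style attention, and one update per token for a state space model. The function names are made up for this illustration, and real models have constant factors and other costs that this ignores.

```python
def attention_cost(context_length: int) -> int:
    """Idealized cost of self-attention: every token is compared
    with every other token, so the count grows with n squared."""
    return context_length ** 2


def state_space_cost(context_length: int) -> int:
    """Idealized cost of a state space model: one state update
    per token, so the count grows linearly with n."""
    return context_length


def growth_factor(cost_fn, base_length: int, multiplier: int) -> float:
    """How much more work is needed when the context grows
    by `multiplier` (hypothetical helper for this example)."""
    return cost_fn(base_length * multiplier) / cost_fn(base_length)


if __name__ == "__main__":
    base = 1_000  # arbitrary starting context length, in tokens
    print("attention  :", growth_factor(attention_cost, base, 2))
    print("state space:", growth_factor(state_space_cost, base, 2))
```

Under these assumptions, doubling the context multiplies the attention count by four and the state space count by two, which is the gap that makes architectures like Mamba attractive for long texts.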

Maybe Altman and his investors should count to ten before investing a budget that could solve world hunger 230 times over into the semiconductor industry?

Useful AI tip

Don’t tell an AI what not to do

Amsterdam was becoming too popular amongst young British men looking for sex, drugs, and rock and roll. To discourage them from coming, an online "Stay away" campaign started. The campaign caused a 22% drop in visitors from the UK compared to 2019. Why am I telling you this? It's better to tell people what to do, like "Stay away," rather than what not to do, such as "Don't visit Amsterdam."

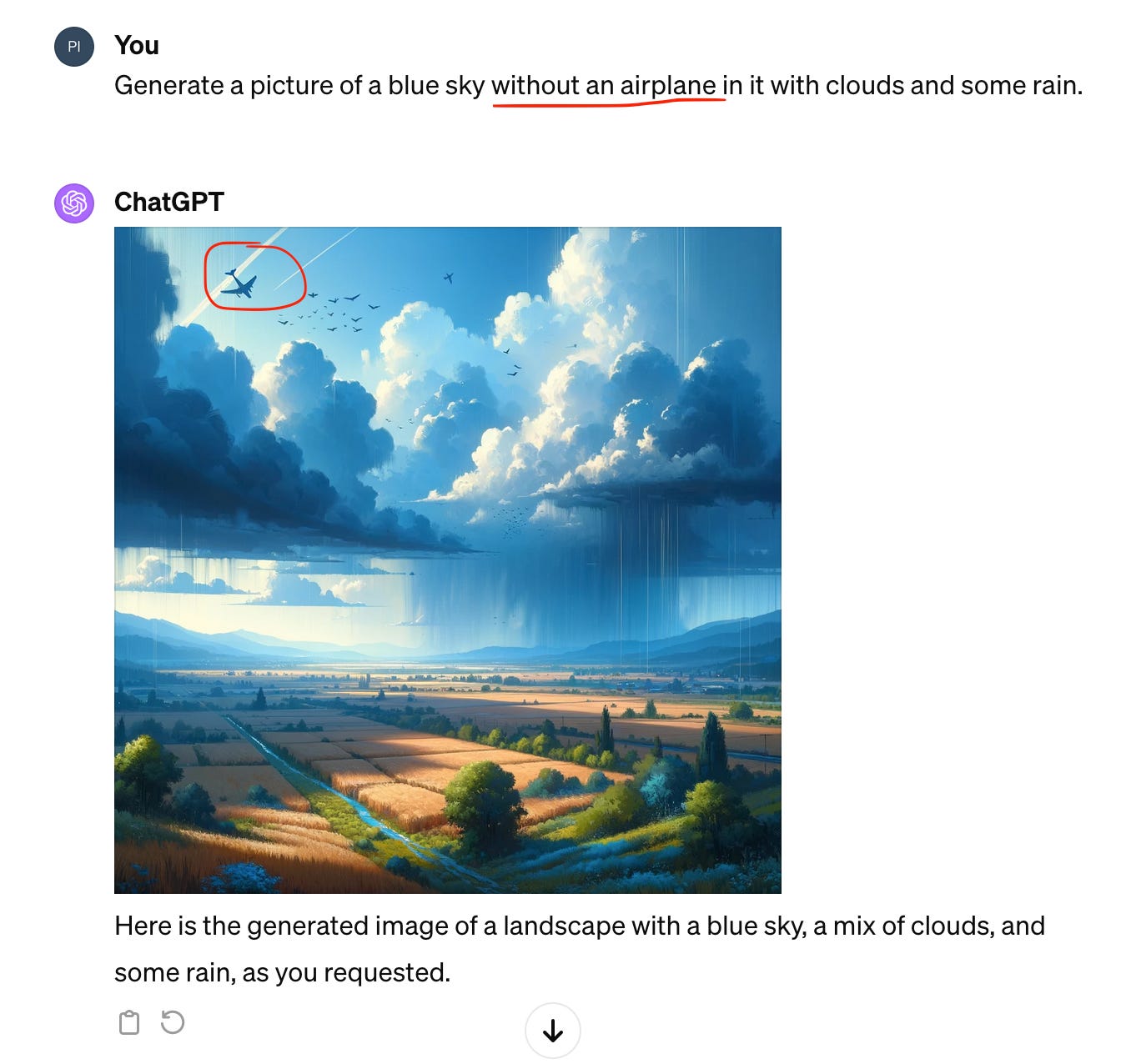

This lesson also helps when talking to large language models. They respond better to direct instructions rather than phrases telling them what not to do, especially when generating images. See the example below.

So to get an image without an airplane, avoid mentioning "airplane" in your prompt altogether. To get the desired textual output, be as specific and direct in your prompts as possible. Let’s say you are looking for a comprehensive definition of loss for a contract you are drafting.

This is how not to do it:

Give me a definition of loss. Don’t make it too simplistic.

This is how to do it:

Generate a detailed definition of loss for a contract I am drafting. The definition should be accurate, complete and include direct damages, consequential damages, and incidental damages.

The more specific and direct you are in your prompts, the higher your chance of getting the output you are looking for.